Queue Depth Is Not Enough: Adding Workload Metrics to BullMQ

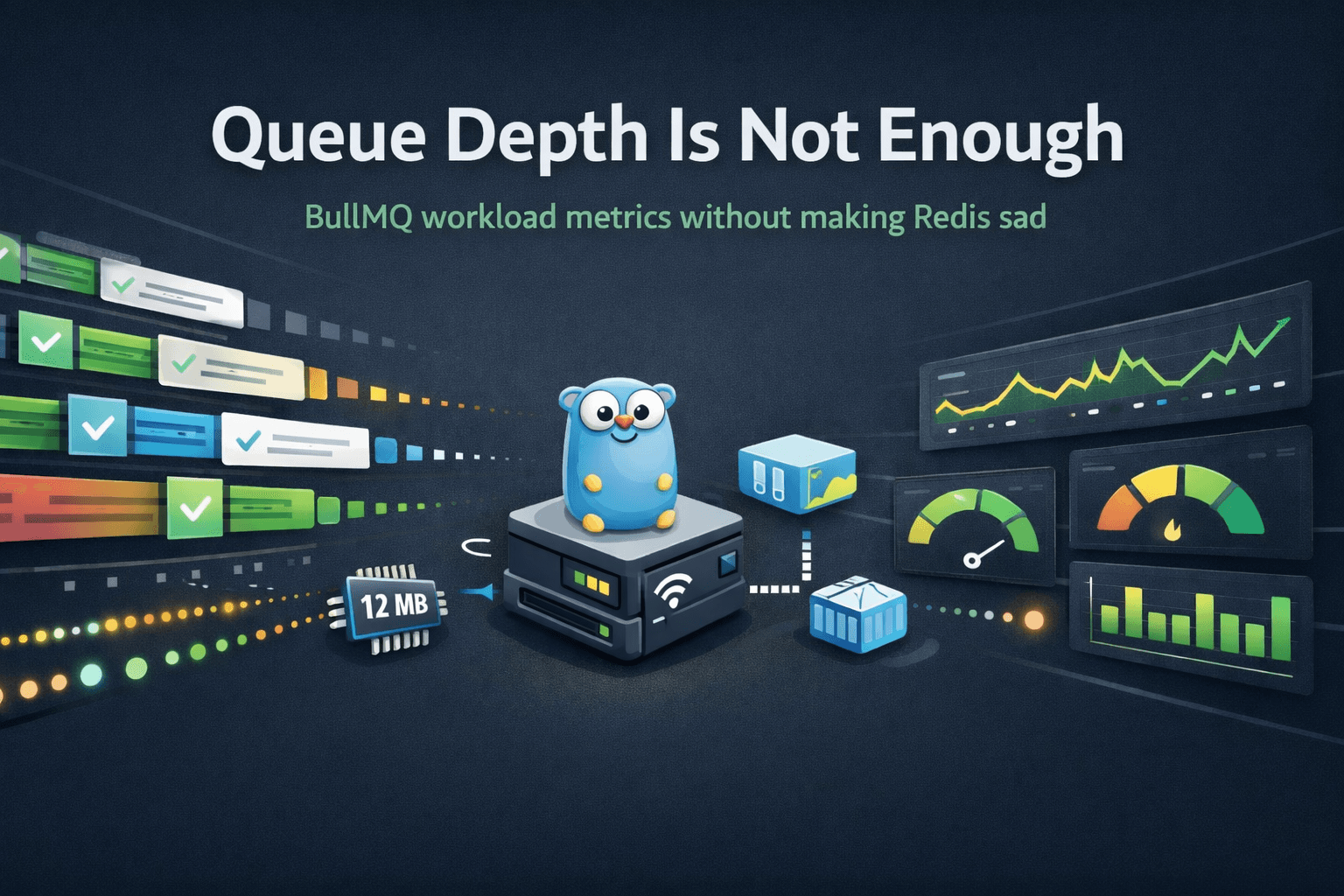

I built bull-der-dash because I wanted a simple, production-friendly way to see what was happening inside our BullMQ queues.

BullMQ is excellent. Redis-backed queues, retries, delayed jobs, priorities, parent-child flows: it gives you a lot of power. But once you start running real workloads through it, the operational questions get more specific.

At first, queue depth feels like enough.

How many jobs are waiting?

How many are active?

How many failed?

How many completed?

Those are useful questions, and they were the first things bull-der-dash answered. The dashboard could show live queue state, inspect jobs, expose Prometheus metrics, and run cheaply in Kubernetes.

But queue depth is not workload visibility.

Eventually I wanted to answer different questions:

- Which job types are running most often?

- Which jobs are slow?

- What is the p95 or p99 completion time by job name?

- Which workloads are failing?

- Are failures isolated to one queue or one job type?

- During a spike, what actually changed?

A queue can look healthy at the depth level while one specific workload is getting slower. A queue can drain quickly while hiding a rising failure rate. A completed count can tell you that work finished, but not whether the work took 50 milliseconds or 30 seconds.

That gap is what pushed me to add workload metrics.

The Tempting Bad Idea

BullMQ stores completed and failed jobs in Redis. Job hashes include useful fields like the job name and timestamps. In particular, BullMQ jobs expose fields such as:

- name

- timestamp

- processedOn

- finishedOn

- failedReason

- attemptsMade

So the obvious idea is simple:

- Scan the completed and failed job sets.

- Read each job hash.

- Calculate finishedOn - processedOn.

- Export success/failure counts and duration percentiles.

That would work in a demo.

It is also exactly the wrong shape for a production monitoring path.

The cost of that design grows with retained job history. Every pass re-reads the retained set, so if you keep thousands or millions of completed jobs, the collector gets more expensive as history accumulates, even when the rate of new work stays flat.

Even worse, if that work happens during a Prometheus scrape, /metrics stops being a cheap in-memory export and becomes an accidental Redis workload.

That is not a small detail.

A monitoring tool should reduce uncertainty. It should not become part of the production problem.

The Performance Constraint

bull-der-dash already had a performance philosophy:

- frequently polled pages should stay cheap

- queue stats should use lightweight Redis commands like LLEN and ZCARD

- expensive diagnostics should stay off hot paths

- /metrics should export in-memory Prometheus data

- Redis scans should be treated with suspicion

That philosophy matters because queues can be spiky. A normal day might look quiet, then suddenly fan out to hundreds or thousands of queued jobs.

In our case, peak fan-out can mean around 1,500 jobs waiting across BullMQ queues. That is not enormous by distributed systems standards, but it is enough that careless observability can become visible load.

The goal was not merely "add more metrics."

The goal was:

Add workload visibility without making Redis pay for every scrape.

That constraint shaped the whole design.

The Better Source of Truth: BullMQ Events

BullMQ already emits events.

For each queue, BullMQ writes to an event stream in Redis. Instead of repeatedly asking Redis, "What jobs have ever completed?", the collector can ask, "What has happened since I last checked?"

That changes the scaling model.

Bad model:

metric cost ~= retained jobs

Better model:

metric cost ~= newly finished jobs

That is the key design move.

The workload metrics collector now watches BullMQ event streams in the background. It listens for terminal job events, currently completed and failed.

The implementation lives in the internal/workloadmetrics package in the bull-der-dash repository. The important part is not a large framework or a complicated metrics pipeline; it is a small background collector that reads BullMQ events incrementally and records Prometheus metrics in memory.

When a terminal event appears, the collector reads only the fields it needs from the job hash:

HMGET bull:{queue}:{jobId} name processedOn finishedOn

Then it records Prometheus metrics in memory.

Prometheus scrapes /metrics, and /metrics does not need to query Redis to produce those workload metrics.
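The duration step is small enough to sketch. The following is an illustration, not the bull-der-dash code: it assumes the three HMGET values arrive as strings, that processedOn and finishedOn hold millisecond Unix timestamps, and that a missing field shows up as an empty string. The jobSample type and sampleFromFields function are hypothetical names.

```go
package main

import (
	"errors"
	"fmt"
	"strconv"
	"time"
)

// jobSample is a hypothetical container for what one finished job contributes.
type jobSample struct {
	Name        string
	Duration    time.Duration
	HasDuration bool // false when a timestamp was missing, so only the count is usable
}

var errNegativeDuration = errors.New("finishedOn precedes processedOn")

// sampleFromFields turns the HMGET values (name, processedOn, finishedOn)
// into a sample. Empty strings stand in for missing hash fields.
func sampleFromFields(name, processedOn, finishedOn string) (jobSample, error) {
	s := jobSample{Name: name}
	if processedOn == "" || finishedOn == "" {
		return s, nil // the job can still be counted, just without a duration
	}
	start, err := strconv.ParseInt(processedOn, 10, 64)
	if err != nil {
		return s, fmt.Errorf("processedOn: %w", err)
	}
	end, err := strconv.ParseInt(finishedOn, 10, 64)
	if err != nil {
		return s, fmt.Errorf("finishedOn: %w", err)
	}
	if end < start {
		return s, errNegativeDuration
	}
	s.Duration = time.Duration(end-start) * time.Millisecond
	s.HasDuration = true
	return s, nil
}

func main() {
	s, err := sampleFromFields("send-email", "1700000000000", "1700000001500")
	fmt.Println(s.Name, s.Duration, s.HasDuration, err)
}
```

A histogram observation would then use s.Duration.Seconds(), and only when HasDuration is true.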

The flow looks like this:

    BullMQ worker
        |
        v
    Redis stream: bull:{queue}:events
        |
        v
    bull-der-dash workload collector
        |
        +--> HMGET bull:{queue}:{jobId} name processedOn finishedOn
        |
        v
    Prometheus counters and histograms
        |
        v
    Grafana

This is the shape I wanted: incremental, cheap, and operationally understandable.
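The incremental part of that flow comes down to a cursor per queue. Here is a minimal sketch of the idea, with Redis left out so it runs on its own. It assumes each stream entry carries an "event" field and a "jobId" field; the streamEntry, terminalEvent, and cursor types are hypothetical and are not taken from the internal/workloadmetrics package.

```go
package main

import "fmt"

// streamEntry mirrors one Redis stream entry: an ID plus field/value pairs.
type streamEntry struct {
	ID     string
	Fields map[string]string
}

// terminalEvent is a finished-job notification extracted from the stream.
type terminalEvent struct {
	JobID  string
	Result string // "completed" or "failed"
}

// cursor remembers the last stream ID handled for one queue.
type cursor struct {
	LastID string
}

// consume keeps only terminal events from a batch and advances the cursor
// past every entry it has seen, including the ones it ignores.
func (c *cursor) consume(batch []streamEntry) []terminalEvent {
	var out []terminalEvent
	for _, e := range batch {
		c.LastID = e.ID
		ev := e.Fields["event"]
		if ev != "completed" && ev != "failed" {
			continue // non-terminal events are skipped
		}
		jobID := e.Fields["jobId"]
		if jobID == "" {
			continue // nothing to look up without a job ID
		}
		out = append(out, terminalEvent{JobID: jobID, Result: ev})
	}
	return out
}

func main() {
	c := &cursor{LastID: "$"}
	batch := []streamEntry{
		{ID: "1700000000000-0", Fields: map[string]string{"event": "active", "jobId": "41"}},
		{ID: "1700000000900-0", Fields: map[string]string{"event": "completed", "jobId": "41"}},
		{ID: "1700000001200-0", Fields: map[string]string{"event": "failed", "jobId": "42"}},
	}
	for _, ev := range c.consume(batch) {
		fmt.Println(ev.JobID, ev.Result)
	}
	fmt.Println("next read starts after", c.LastID)
}
```

Passing c.LastID to the next stream read means Redis only returns entries newer than the ones already handled, which is what keeps the cost tied to newly finished jobs rather than retained history.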

The Metrics

The first version exposes a small set of workload metrics.

The metric definitions are in internal/metrics, and the collector code is in internal/workloadmetrics.

Completed and failed job counts:

bullmq_jobs_finished_total{queue, name, result}

Job processing duration:

bullmq_job_completion_duration_seconds_bucket{queue, name, result, le}

bullmq_job_completion_duration_seconds_sum{queue, name, result}

bullmq_job_completion_duration_seconds_count{queue, name, result}

Collector health:

bullmq_workload_event_lag_seconds{queue}

bullmq_workload_events_read_total{queue, event}

bullmq_workload_events_dropped_total{queue, reason}

bullmq_workload_job_lookup_errors_total{queue, reason}

The name label is the BullMQ job name. The result label is either completed or failed.

That gives Grafana enough to answer the questions I actually care about.

For example, completed and failed jobs over a five-minute window:

    sum by (queue, name, result) (
      increase(bullmq_jobs_finished_total[5m])
    )

p95 processing duration by queue and job name:

    histogram_quantile(
      0.95,
      sum by (le, queue, name) (
        rate(bullmq_job_completion_duration_seconds_bucket[5m])
      )
    )
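It helps to remember that a percentile from a bucketed histogram is an estimate. The sketch below is a simplified version of the linear interpolation Prometheus applies to classic histogram buckets; it skips several edge cases the real function handles, and the bucket bounds and counts in main are made-up numbers for illustration, not measurements from bull-der-dash.

```go
package main

import (
	"fmt"
	"math"
)

// bucket is one cumulative bucket: Count observations were <= UpperBound.
type bucket struct {
	UpperBound float64
	Count      float64
}

// posInf is the upper bound of the final catch-all bucket.
var posInf = math.Inf(1)

// estimateQuantile approximates quantile q (between 0 and 1) from cumulative
// buckets sorted by UpperBound, where the last bucket is the +Inf bucket.
func estimateQuantile(q float64, buckets []bucket) float64 {
	if len(buckets) < 2 {
		return math.NaN()
	}
	total := buckets[len(buckets)-1].Count
	if total == 0 {
		return math.NaN()
	}
	rank := q * total
	// Find the first bucket whose cumulative count reaches the rank.
	i := 0
	for i < len(buckets)-1 && buckets[i].Count < rank {
		i++
	}
	if i == len(buckets)-1 {
		// The rank lands in the +Inf bucket, so the highest finite bound is the best answer.
		return buckets[len(buckets)-2].UpperBound
	}
	lower, prevCount := 0.0, 0.0
	if i > 0 {
		lower = buckets[i-1].UpperBound
		prevCount = buckets[i-1].Count
	}
	inBucket := buckets[i].Count - prevCount
	if inBucket == 0 {
		return lower
	}
	// Assume observations are spread evenly inside the bucket.
	return lower + (buckets[i].UpperBound-lower)*(rank-prevCount)/inBucket
}

func main() {
	buckets := []bucket{{0.1, 50}, {0.5, 90}, {1, 98}, {posInf, 100}}
	fmt.Println("p95 estimate:", estimateQuantile(0.95, buckets))
	fmt.Println("p99 estimate:", estimateQuantile(0.99, buckets))
}
```

The practical consequence is that p95 and p99 are only as precise as the bucket boundaries around them, so bucket choice deserves attention for workloads with wide duration ranges.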

Failure ratio by queue and job name:

    sum by (queue, name) (
      rate(bullmq_jobs_finished_total{result="failed"}[5m])
    )
    /
    sum by (queue, name) (
      rate(bullmq_jobs_finished_total[5m])
    )

Collector lag:

bullmq_workload_event_lag_seconds
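This post does not spell out how the lag value is derived, so treat the following as one plausible approach rather than a description of the collector. It assumes the event stream uses Redis auto-generated IDs of the form milliseconds-sequence, so the ID of the last entry read doubles as a timestamp. The eventLag function is a hypothetical name.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
	"time"
)

// eventLag estimates how far behind the collector is by comparing the
// millisecond prefix of a stream ID with the current time in Unix milliseconds.
func eventLag(streamID string, nowUnixMs int64) (time.Duration, error) {
	msPart, _, found := strings.Cut(streamID, "-")
	if !found {
		return 0, fmt.Errorf("stream ID %q is not in <ms>-<seq> form", streamID)
	}
	eventMs, err := strconv.ParseInt(msPart, 10, 64)
	if err != nil {
		return 0, fmt.Errorf("stream ID %q: %w", streamID, err)
	}
	lagMs := nowUnixMs - eventMs
	if lagMs < 0 {
		lagMs = 0 // clock skew between Redis and the collector host
	}
	return time.Duration(lagMs) * time.Millisecond, nil
}

func main() {
	lag, err := eventLag("1700000000000-0", time.Now().UnixMilli())
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println("lag seconds:", lag.Seconds())
}
```

A steadily growing value would suggest the collector is falling behind the stream, which is exactly the condition worth alerting on.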

These are simple metrics, but they move the dashboard from "how deep are my queues?" to "what work is actually happening?"

Cardinality Still Matters

Adding name as a label is useful, but labels are not free.

Prometheus cardinality can get out of hand if labels contain unbounded values. A queue name is usually safe. A job name is often safe. Arbitrary job data is not.

So the collector does not label metrics by job payload, title, user ID, tenant ID, or anything else that might explode.

It also caps the number of job names per queue.

If a queue exceeds the configured job-name limit, additional job names are grouped under:

__other__

If a terminal event is observed but the job hash is already gone before the collector can read it, the count is still recorded under:

__unknown__

That case can happen if completed or failed jobs are removed aggressively. Counts still matter, but duration samples require processedOn and finishedOn.
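The two guardrails above can be sketched in a few lines. This is an illustration of the technique under my own assumptions, not the code in the repository: nameLimiter and its label method are hypothetical names, an empty job name stands in for a hash that was already gone, and the type is not safe for concurrent use without a mutex.

```go
package main

import "fmt"

const (
	otherName   = "__other__"
	unknownName = "__unknown__"
)

// nameLimiter bounds how many distinct job names each queue may introduce
// as label values.
type nameLimiter struct {
	max  int
	seen map[string]map[string]struct{} // queue -> set of admitted job names
}

func newNameLimiter(max int) *nameLimiter {
	return &nameLimiter{max: max, seen: make(map[string]map[string]struct{})}
}

// label returns the value to use for the name label of one finished job.
func (l *nameLimiter) label(queue, jobName string) string {
	if jobName == "" {
		return unknownName // the job hash was gone before it could be read
	}
	names := l.seen[queue]
	if names == nil {
		names = make(map[string]struct{})
		l.seen[queue] = names
	}
	if _, ok := names[jobName]; ok {
		return jobName // already admitted
	}
	if len(names) >= l.max {
		return otherName // over the cap: fold into the shared bucket
	}
	names[jobName] = struct{}{}
	return jobName
}

func main() {
	l := newNameLimiter(2)
	for _, n := range []string{"send-email", "resize-image", "export-report", "send-email", ""} {
		fmt.Printf("%q -> %q\n", n, l.label("default", n))
	}
}
```

Names admitted before the cap keep their own series, so the most established workloads stay visible while late arrivals share one bucket.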

Those guardrails are not glamorous, but they are the difference between useful production metrics and a Prometheus cardinality incident.

A Small Architecture Compromise

Originally, bull-der-dash leaned hard into being stateless.

That was the right default. Stateless HTTP handlers are easy to scale, easy to reason about, and easy to operate.

But event-derived metrics need a tiny bit of state:

- the last stream ID read per queue

- in-memory Prometheus counters and histograms

- bounded job-name tracking

So I refined the architecture rule.

The web request path stays stateless. The dashboard, job inspection pages, health checks, and /metrics export do not depend on session state.

But optional background collectors are allowed to maintain small, ephemeral telemetry state.

That is a practical distinction:

Stateless HTTP serving vs. ephemeral state for telemetry collection.

I did not add leader election or distributed coordination in the first version. The current deployment runs a single bull-der-dash instance, so a single in-process collector is the simplest correct design.

If we later run multiple replicas, the collector will need explicit ownership coordination or a separate single-replica deployment. Otherwise multiple pods could read the same BullMQ events and double-count.

That is a good future problem. It did not need to be solved on day one.

Why Go Has Been a Good Fit

One of the quiet wins in this project has been the choice to build bull-der-dash in Go.

In my environment, bull-der-dash sits around 12 MB RSS. A TypeScript-native BullMQ dashboard we compared against was closer to 500 MB RSS while offering less of the workload visibility we wanted.

That difference matters.

A monitoring tool should be cheap enough to leave running continuously. It should not become another meaningful workload to operate.

This is not a "Go beats TypeScript" argument. TypeScript is a great application language, and existing BullMQ dashboards are useful. The point is that for production-adjacent tooling, the operating model matters.

Go gives this project a nice shape:

- one small binary

- low idle memory

- simple container packaging

- straightforward Prometheus integration

- cheap background goroutines

- predictable Kubernetes footprint

That makes it easier to add observability without worrying that the observability tool itself is becoming expensive.

The most important performance feature of an internal monitoring tool might be that you barely notice it running.

What Changed Operationally

The rollout was intentionally boring.

The collector is behind a feature flag:

WORKLOAD_METRICS_ENABLED=true

The configuration is exposed through the Helm chart as well, so the same binary can run with workload metrics disabled by default and enabled per environment.

By default, it starts from new events only:

WORKLOAD_METRICS_START_ID=$

That means no startup backfill and no surprise Redis scan. Existing completed jobs are not retroactively counted by the workload metrics. The metrics reflect events observed while the collector is running.
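For readers wiring up something similar, here is a minimal sketch of reading those two settings with the defaults described above: disabled unless turned on, and starting from new events only. The workloadConfig type and loadWorkloadConfig function are hypothetical, and the lookup parameter exists only so the function can be exercised without touching the real environment.

```go
package main

import (
	"fmt"
	"os"
	"strconv"
)

// workloadConfig holds the two settings discussed in this section.
type workloadConfig struct {
	Enabled bool
	StartID string
}

// loadWorkloadConfig reads the settings through lookup, which has the same
// signature as os.LookupEnv.
func loadWorkloadConfig(lookup func(string) (string, bool)) (workloadConfig, error) {
	// Defaults: collector off, and "$" so only events newer than startup are read.
	cfg := workloadConfig{Enabled: false, StartID: "$"}
	if v, ok := lookup("WORKLOAD_METRICS_ENABLED"); ok && v != "" {
		enabled, err := strconv.ParseBool(v)
		if err != nil {
			return cfg, fmt.Errorf("WORKLOAD_METRICS_ENABLED: %w", err)
		}
		cfg.Enabled = enabled
	}
	if v, ok := lookup("WORKLOAD_METRICS_START_ID"); ok && v != "" {
		cfg.StartID = v
	}
	return cfg, nil
}

func main() {
	cfg, err := loadWorkloadConfig(os.LookupEnv)
	if err != nil {
		fmt.Println("config error:", err)
		return
	}
	fmt.Printf("enabled=%v startID=%s\n", cfg.Enabled, cfg.StartID)
}
```

Failing loudly on an unparseable flag value is a deliberate choice here: a typo should not silently leave the collector off.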

That tradeoff is fine for operational dashboards.

For rollout, the sequence was:

- Enable in a local simulator environment.

- Confirm event reads.

- Confirm completed job counts.

- Confirm duration histograms.

- Watch collector lag.

- Deploy to demo.

- Build Grafana panels manually.

- Promote to production.

That is exactly the kind of rollout I want for production-adjacent tooling: incremental, visible, and easy to back out.

What I Can See Now

With the new metrics, Grafana can show:

- completed jobs by queue and job name

- failed jobs by queue and job name

- p95/p99 duration by workload

- failure ratios

- event collector lag

- lookup errors when job hashes disappear too quickly

- whether job-name cardinality is escaping expectations

That is a much better operational picture than queue depth alone.

Queue depth still matters. It tells me about backlog.

But workload metrics tell me about behavior.

That distinction is the whole point.

Project Links

The project is open source here:

If you are running BullMQ and want lightweight visibility into queue depth, job inspection, Prometheus metrics, and workload duration percentiles, I would love feedback or issues.

Closing Thought

The lesson from this work is not specific to BullMQ.

It is a general observability lesson:

The easiest metric to compute is not always the safest metric to collect.

For BullMQ, scanning retained jobs would have been easy to understand, easy to implement, and easy to regret.

Reading event streams incrementally is a better fit for the system. It gives us the workload visibility we need while preserving the performance characteristics that made bull-der-dash useful in the first place.

Observability should make production clearer.

It should not make Redis sad.